In vSphere 5 a new feature was added that many people may be overlooking. It is called Multi-NIC vMotion. If you have an environment with 2 or more dedicated uplinks reserved for vMotion, you can double the total bandwidth available by using this option. Do I have your attention? 🙂

Normally you would have 1 port group with both of your vMotion uplinks set to active like this:

What if I told you that when you are vMotioning a VM, the VMkernel is picking one of those uplinks and basically ignoring the other? According to VMware KB article 2010877, “The VMkernel TCP/IP stack uses a single routing table to route traffic. If you have multiple VMkernel network interfaces (vmknics) that belong to the same IP subnet, the VMkernel TCP/IP stack picks one of the interfaces for all outgoing traffic on that subnet as dictated by the routing table.” If you want to use both uplinks for vMotion traffic and double your total bandwidth, you have to create two vMotion VMkernel adapters and assign each one an uplink. There were some issues with multi-NIC vMotion if you were running an ESXi version earlier than 5.0 U2. As always, try this out in your test environments first 🙂

Here is VMware KB2007467, which walks you through these steps and complements my steps below.

First we will create a new port group and rename the current vMotion port group so we can tell them apart. In my case I will name one “vMotion-uplink3” and the other “vMotion-uplink4”.

Now right-click a port group, go to Edit Settings, then click on Teaming and Failover. Leave one uplink active and move the other to standby so you do not lose redundancy. Do the same thing for the second vMotion port group but flip the uplinks. See screenshots below:

Now the port groups are set for the next step.
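If you want to sanity-check the teaming layout before moving on, here is a rough Python sketch of the rule described above: each port group has exactly one active uplink, the other uplink sits in standby, and no uplink is active in both port groups. The uplink names (`dvUplink3`, `dvUplink4`) and the helper function are my own placeholders for illustration, not anything pulled from vCenter.

```python
# Hypothetical model of the two vMotion port groups described above.
# The uplink names are placeholders; substitute your own dvUplink names.
port_groups = {
    "vMotion-uplink3": {"active": ["dvUplink3"], "standby": ["dvUplink4"]},
    "vMotion-uplink4": {"active": ["dvUplink4"], "standby": ["dvUplink3"]},
}


def check_multi_nic_teaming(groups):
    """Return a list of problems with the teaming layout (empty if none found)."""
    problems = []

    # Collect every uplink mentioned anywhere, active or standby.
    all_uplinks = set()
    for pg in groups.values():
        all_uplinks.update(pg["active"], pg["standby"])

    active_seen = {}  # uplink name -> port group where it is active
    for name, pg in groups.items():
        if len(pg["active"]) != 1:
            problems.append(f"{name}: expected exactly one active uplink")
        if not pg["standby"]:
            problems.append(f"{name}: no standby uplink, so no redundancy")
        for uplink in pg["active"]:
            if uplink in active_seen:
                problems.append(
                    f"{uplink} is active in both {active_seen[uplink]} and {name}"
                )
            active_seen[uplink] = name

    # An uplink that is only ever standby carries no vMotion traffic.
    for uplink in sorted(all_uplinks - set(active_seen)):
        problems.append(f"{uplink} is never active")
    return problems


print(check_multi_nic_teaming(port_groups) or "Teaming layout looks right")
```

If you forget to flip the uplinks on the second port group, the check reports the uplink that is active twice and the one that is never active.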

Go to Hosts and Clusters, click on a host, then click on the Configuration tab -> Networking -> vDS -> Manage Virtual Adapters.

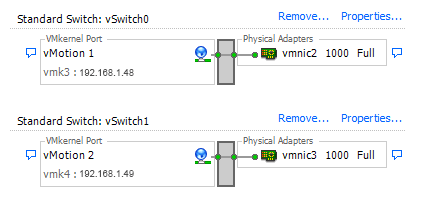

I have only 1 vMotion VMkernel adapter configured, using vMotion-uplink3. I want to add another VMkernel adapter set for vMotion that will use vMotion-uplink4. Click Add:

Choose New virtual adapter and click Next:

Click Next:

Select the new vMotion port group and check the vMotion checkbox. Click Next:

Give this new vMotion VMkernel adapter a new, unused IP address and click Next:

Now click Finish:
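Since every host needs its own extra vMotion address, it can help to check your address plan before you start clicking through hosts. Below is a minimal Python sketch of that check. The subnet, host names, and addresses are made-up examples, and it assumes you keep all of your vMotion VMkernel adapters on one subnet, so adjust it to match your own design.

```python
import ipaddress

# Hypothetical addressing plan: the subnet and addresses are examples only.
vmotion_subnet = ipaddress.ip_network("192.168.50.0/24")
existing = {"esx01-vmk1": "192.168.50.11", "esx02-vmk1": "192.168.50.12"}
proposed = {"esx01-vmk2": "192.168.50.21", "esx02-vmk2": "192.168.50.22"}


def validate_new_vmknics(subnet, in_use, new):
    """Return a list of problems with the proposed vMotion VMkernel addresses."""
    problems = []
    # Map each address already assigned to the adapter that owns it.
    taken = {ipaddress.ip_address(ip): name for name, ip in in_use.items()}
    for name, ip in new.items():
        addr = ipaddress.ip_address(ip)
        if addr not in subnet:
            problems.append(f"{name}: {addr} is outside {subnet}")
        if addr in taken:
            problems.append(f"{name}: {addr} is already used by {taken[addr]}")
        # Remember this address so duplicates within the new plan are caught too.
        taken[addr] = name
    return problems


print(validate_new_vmknics(vmotion_subnet, existing, proposed) or "Address plan looks fine")
```

This only checks the plan you type in. It does not query your hosts, so an address that is in use somewhere else on the network will still slip through.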

That is it. You now have multi-NIC vMotion configured. You will have to do this on each host where you want to enable it. Put a host in maintenance mode and see if you get an increase in speed. You can also turn on jumbo frames if your switch supports them for a further speed increase! In our test environment, which has two 1 Gb vMotion uplinks, it reduced the time it took for a host to enter maintenance mode from 40 minutes down to the low to mid 20s. Please leave a comment and let me know your results!!
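To put your own numbers in context, here is a quick bit of arithmetic in Python. The 40-minute baseline is from our test environment. The 22 and 25 minute values are just my picks from within the "low to mid 20s" range, and 20 minutes is what a perfect doubling would give you. Entering maintenance mode involves more than raw data transfer, so do not expect to hit the ideal figure.

```python
def speedup(before_minutes, after_minutes):
    """How many times faster the host evacuated after the change."""
    return before_minutes / after_minutes


before = 40  # minutes to enter maintenance mode with a single vMotion uplink in use

# 20 = ideal halving; 22 and 25 = assumed samples from the "low to mid 20s" range
for after in (20, 22, 25):
    print(f"{before} min -> {after} min: {speedup(before, after):.2f}x faster")
```

In other words, we saw somewhere around 1.6x to 1.8x out of a theoretical 2x.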