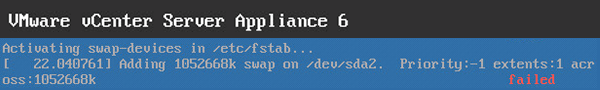

This past weekend I decided to rewire my home lab and accidentally pulled the power to the host that my VCSA was running on. While my VCSA 6 was booting back up, I received the following error:

    fsck failed. Please repair manually and reboot. The root file system is currently mounted read-only. To remount it read-write do:
    bash# mount -n -o remount,rw